My homelab was running on a single Dell R210. It had pfSense and a few VMs, and all the self-hosted apps were deployed using Docker. Things ran fine for more than a year, but with only one server I was missing high availability. I wanted my services to be available always! To achieve high availability on Proxmox you need a minimum of 3 servers. Thus the hunt for 2 more began!
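Why 3? Proxmox clustering relies on a majority vote (quorum) among the nodes, so the cluster stays healthy only while more than half of the votes are present. Here is a minimal sketch of that arithmetic, assuming one vote per node and no external quorum device. The function names are just for illustration.

```python
def quorum(nodes: int) -> int:
    """Votes needed for a strict majority (assumes one vote per node)."""
    return nodes // 2 + 1


def tolerated_failures(nodes: int) -> int:
    """How many nodes can go down while the rest still hold a majority."""
    return nodes - quorum(nodes)


for n in (2, 3, 5):
    print(f"{n} nodes: quorum={quorum(n)}, can lose {tolerated_failures(n)}")
```

With 2 nodes, losing either one loses the majority, which is why 3 is the practical minimum.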

Hardware Upgrades

Many say OLX is a bad place, full of scammers, and I never thought I would find good and genuine deals there, especially for servers. Back in September 2020, I came across a deal for a Dell R520 server with dual hex-core processors and 128GB of memory for 35k! The deal was posted by the IT manager of a small firm in Bangalore, and it seems they were upgrading. I got 2 Dell R520s with 64GB of RAM and dual hex-core processors for 65k! 64 gigs is more than sufficient for me. Thanks to a friend in Bangalore, I could get these servers delivered to my door free of cost 😉

The servers had Fibre Channel cards (seems they were using some SAN storage arrays), dual power supplies, dual RJ45 network adapters and an iDRAC 7 card. Such a sweet deal for the money. The one thing they did not have was HDD caddies. I was planning to put in 5 x 2 TB HDDs as a RAIDZ2 pool, so I had to shell out some extra money to buy the caddies.
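A rough back-of-the-envelope for that pool: RAIDZ2 spends two drives' worth of space on parity, so usable capacity is roughly (drives - 2) x drive size. The sketch below ignores ZFS metadata, padding and the TB vs TiB difference, so the real number will be somewhat lower. The helper name is mine, for illustration.

```python
def raidz2_usable_tb(drives: int, drive_tb: float) -> float:
    """Approximate usable space of a single RAIDZ2 vdev (two parity drives)."""
    if drives < 4:
        # Assumption: treat 4 drives as the practical minimum for RAIDZ2.
        raise ValueError("this sketch expects at least 4 drives")
    return (drives - 2) * drive_tb


print(raidz2_usable_tb(5, 2.0))  # the 5 x 2 TB layout planned above
```

So 5 x 2 TB gives roughly 6 TB usable, and the pool survives any two drives failing.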

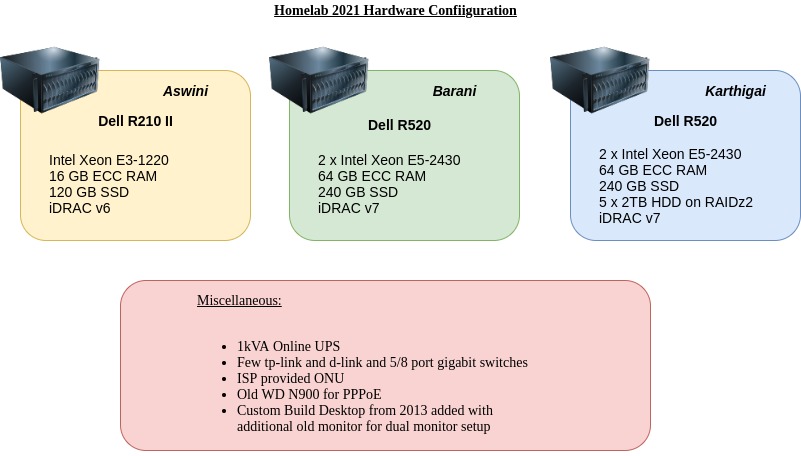

My current homelab has the following hardware, drawing around 500W and consuming more than 200 units of electricity per month 😑 I sold the small WD My Cloud EX2 NAS since I have plenty of drive space in my R520s and Proxmox has native ZFS support.
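For a rough sanity check on those numbers: one unit of electricity is 1 kWh, so a steady load in watts converts to monthly units as watts x hours / 1000. Here is a small sketch, assuming a 30-day month and a constant load. The helper names are mine, for illustration.

```python
HOURS_PER_MONTH = 24 * 30  # assumption: 30-day month, running 24x7


def monthly_units(watts: float) -> float:
    """Units (kWh) used in a month by a constant load of `watts`."""
    return watts * HOURS_PER_MONTH / 1000


def average_watts(units: float) -> float:
    """Average draw in watts implied by a monthly consumption in units."""
    return units * 1000 / HOURS_PER_MONTH


print(monthly_units(500))         # units per month at a constant 500 W
print(round(average_watts(200)))  # average watts implied by 200 units
```

A constant 500W works out to 360 units a month, which fits the "more than 200 units" on the bill.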

The Rack

I enquired with massrack about a 17U to 22U server rack. They told me they had a 17U, 1000mm-depth rack which would cost around 15k. I was not keen to spend that at the time since I had already spent a lot on servers, so I decided to build my own rack, since my uncle had a pretty good laser cutting machine in his factory. The result was somewhat OK, but the main problem was that I could not get it to close due to alignment issues, and there is a slight mismatch with the square holes on the rails. I guess I will buy a proper server rack later this year.

High Availability (HA) Dilemma

Setting up a 3-node Proxmox cluster is pretty straightforward and well documented. So I quickly set up a 3-node Proxmox cluster with my 2 new servers, and then it was time to dig into HA.

Initially I had set up my services in a VM and made it a highly available VM in Proxmox. But there was a delay, since the VM disk has to be moved from one node to another during failover. Then I stumbled upon Docker Swarm, which seemed to check all the requirements. But the nerds on Reddit were advising to go with Kubernetes instead of Docker Swarm.

I checked a few tutorials and blogs (mentioned in the reference section below) and decided to go with k3s, a lightweight Kubernetes distribution created by Rancher Labs.

First I installed the Rancher UI and tried to install apps through the GUI. It was very confusing, and I prefer YAML like in docker-compose. So I deleted the Rancher installation and started creating apps from plain manifests. Somehow I didn't like Helm charts either. Helm charts allow customisation too, but creating a manifest from scratch lets me customise everything, so I prefer plain manifests.

Kubernetes felt very strange for about a month, and the manifest files looked alien to me at first. But after trying it out and deploying more apps, I realised k8s was the way to go! If one day I decide to sell my homelab hardware for some reason, I can still move my apps to the cloud with the same manifest files (small modifications might be required) in minimal time, as almost all cloud providers offer a managed Kubernetes service.

Now when you delete a pod in k3s, it instantly spins up another one. A node turns off? No problem, all the services on the failed node are started on the available nodes with minimum downtime. The main issue with the Proxmox HA VM was not the downtime, but the reliability of restarting services on another node when one fails. I also use Longhorn for persistent storage, which pools the free space on all nodes into usable space for persistent volumes.

Dual WAN and pfSense

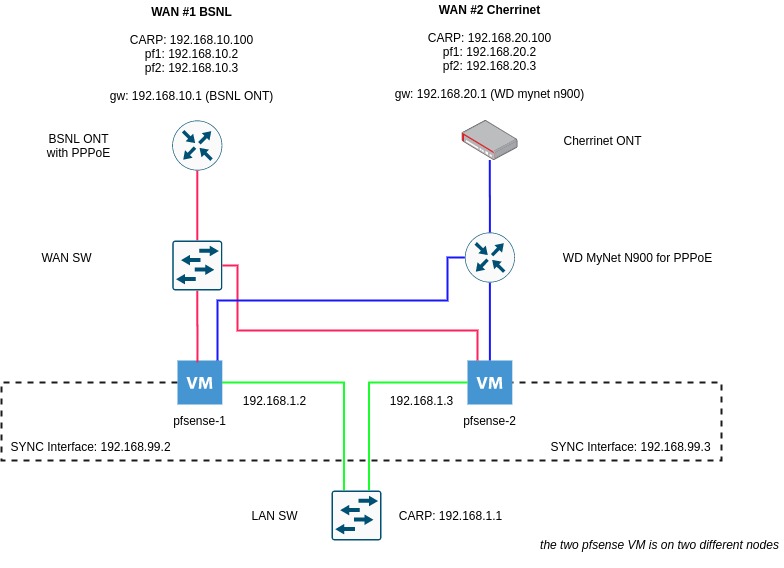

I already had a 100 Mbps FTTH connection from cherrinet. Since it had downtime due to cable cuts (not often, but 3-4 times a month), I wanted a backup connection. Luckily BSNL was also providing FTTH in my area, so I decided to get it and set up dual WAN in pfSense. I downgraded the cherrinet plan to 50 Mbps, and the BSNL connection is 60 Mbps. After some tinkering I got dual WAN working in pfSense. It was not as easy as I expected 😏 Here is how mine works. You can read more on CARP and dual WAN setup in the official Netgate docs, which have a detailed explanation.

Current State

This is my current setup as of writing this blog post. I use nginx and MetalLB for ingress, and Longhorn for storage.

Upgrades and ToDo

There is always something to change and something to upgrade.

Apps and Services

Have to find a way to archive all my mailboxes. Need to set up webtrees for the family. And anything interesting from r/selfhosted.

Backups

As of now I have not configured proper backups for persistent volumes. I am looking at setting up MinIO or a direct NFS backup to my ZFS pool from inside Longhorn. Also, for my personal files, I need to automate my manual backup strategy and push important files to the cloud.

Gigabit switch

I had a Dell PowerConnect 16-port switch. The power supply (5V, 8A) on it failed and I purchased an after-market one from Amazon, which worked fine for a few months. At present it is not working. I need to upgrade to a Cisco/HP/Dell 16-24 port all-gigabit switch. Still looking on OLX sometimes 😄

UPS

The 1kVA UPS I have is currently the only single point of failure 😨. Also, the backup time is less than 30 minutes. I need to get one more 1kVA UPS and make use of the additional PSU in the R520s. Also looking at a solar setup 😉 let us see

Rack

Need to get a proper server rack to enclose the servers and make some arrangement to vent the hot air out from the back. Also need to fit blanking panels to avoid hot and cold air mixing.

Desktop

Last one on the list, looking at AMD custom builds. This will take some time…

Reference

Some sites and videos which I found very useful while building my homelab. (Of course there is Reddit for everything else.)